Google blog

Greg Corrado

Head of Health AI

Mar 24, 2022

Over the years, teams across Google have focused on how technology — specifically artificial intelligence and hardware innovations — can improve access to high-quality, equitable healthcare across the globe.

Accessing the right healthcare can be challenging depending on where people live and whether local caregivers have specialized equipment or training for tasks like disease screening.

To help, Google Health has expanded its research and applications to focus on improving the care clinicians provide and allowing care to happen outside hospitals and doctor’s offices.

Today, at our Google Health event, The Check Up, we’re sharing new areas of AI-related research and development, along with how we’re providing clinicians with easy-to-use tools to help them better care for patients.

Here’s a look at some of those updates.

- Smartphone cameras’ potential to protect cardiovascular health and preserve eyesight

- Recording and translating heart sounds with smartphones

- Partnering with Northwestern Medicine to apply AI to improve maternal health

Smartphone cameras’ potential to protect cardiovascular health and preserve eyesight

One of our earliest Health AI projects, ARDA, aims to help screen for diabetic retinopathy, a complication of diabetes that can cause blindness if it goes undiagnosed and untreated.

Today, we screen 350 patients daily, resulting in close to 100,000 patients screened to date. We recently completed a prospective study with the Thailand national screening program that further demonstrates that ARDA is accurate and can be deployed safely across multiple regions to support more accessible eye screenings.

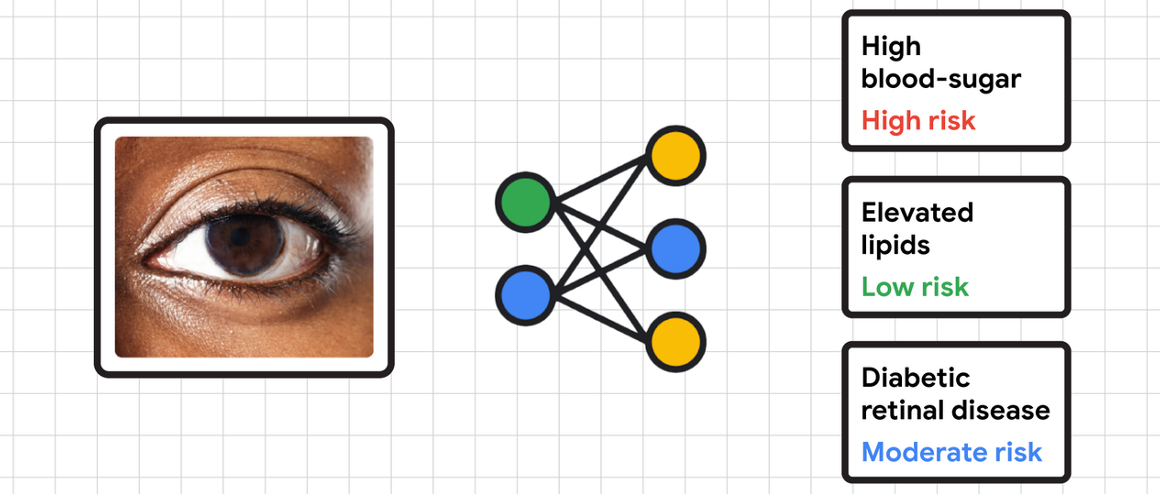

In addition to diabetic eye disease, we’ve previously shown how, with assistance from deep learning, photos of the interior of the eye (the fundus) can reveal cardiovascular risk factors such as high blood sugar and cholesterol levels. Our recent research tackles detecting diabetes-related diseases from photos of the exterior of the eye, taken with existing tabletop cameras in clinics. Given the early promising results, we’re looking forward to clinical research with partners, including EyePACS and Chang Gung Memorial Hospital (CGMH), to investigate whether external eye photos taken with smartphone cameras can also help detect both diabetes-related and non-diabetes-related diseases. While this work is in the early stages of research and development, our engineers and scientists envision a future where people, with the help of their doctors, can better understand and make decisions about health conditions from their own homes.

Recording and translating heart sounds with smartphones

We’ve previously shared how mobile sensors combined with machine learning can democratize health metrics and give people insights into their daily health and wellness. Our feature that allows you to measure your heart rate and respiratory rate with your phone’s camera is now available on over 100 models of Android devices, as well as on iOS devices. Our manuscript describing the prospective validation study of this feature has been accepted for publication.

Today, we’re sharing a new area of research that explores whether a smartphone’s built-in microphones can record heart sounds when the phone is placed over the chest. Listening to someone’s heart and lungs with a stethoscope, known as auscultation, is a critical part of a physical exam. It can help clinicians detect heart valve disorders such as aortic stenosis, which is important to detect early. Screening for aortic stenosis typically requires specialized equipment, like a stethoscope or an ultrasound, and an in-person assessment.

Our latest research investigates whether a smartphone can detect heartbeats and murmurs. We’re currently in the early stages of clinical testing, but we hope our work can empower people to use their smartphones as an additional tool for accessible health evaluation.

Partnering with Northwestern Medicine to apply AI to improve maternal health

Ultrasound is a noninvasive diagnostic imaging method that uses high-frequency sound waves to create real-time pictures or videos of internal organs or other tissues, such as blood vessels and fetuses.

Research shows that ultrasound is safe for use in prenatal care and effective in identifying issues early in pregnancy. However, more than half of all birthing parents in low- and middle-income countries don’t receive ultrasounds, in part because of a shortage of expertise in reading them. We believe that Google’s expertise in machine learning can help address this gap and allow for healthier pregnancies and better outcomes for parents and babies.

We are working on foundational, open-access research studies that validate the use of AI to help providers conduct ultrasounds and perform assessments. We’re excited to partner with Northwestern Medicine to further develop and test these models so that they generalize better across different levels of operator experience and different ultrasound technologies. With more automated and accurate evaluations of maternal and fetal health risks, we hope to lower barriers and help people get timely care in the right settings.

Originally published at https://blog.google on March 24, 2022.